问题导读

1.如何进入spark shell?

2.spark shell中如何加载外部文件?

3.spark中读取文件后做了哪些操作?

about云日志分析,那么过滤清洗日志。该如何实现。这里参考国外的一篇文章,总结分享给大家。

使用spark分析网站访问日志,日志文件包含数十亿行。现在开始研究spark使用,他是如何工作的。几年前使用hadoop,后来发现spark也是容易的。

下面是需要注意的:

如果你已经知道如何使用spark并想知道如何处理spark访问日志记录,我写了这篇短的文章,介绍如何从Apache访问日志文件中生成URL点击率的排序

安装

spark安装需要安装hadoop,并且二者版本要合适。安装可参考下面文章

about云日志分析项目准备6:Hadoop、Spark集群搭建

http://www.aboutyun.com/forum.php?mod=viewthread&tid=20620

进入

[mw_shl_code=bash,true]./bin/spark-shell[/mw_shl_code]

可能会出错[mw_shl_code=bash,true]java.io.FileNotFoundException: File file:/data/spark_data/history/event-log does not exist

[/mw_shl_code]

解决办法:

[mw_shl_code=bash,true]mkdir -p /data/spark_data/history/event-log[/mw_shl_code]

详细错误如下

[mw_shl_code=bash,true]17/10/08 17:00:23 INFO client.AppClient$ClientEndpoint: Executor updated: app-20171008170022-0000/1 is now RUNNING

17/10/08 17:00:25 ERROR spark.SparkContext: Error initializing SparkContext.

java.io.FileNotFoundException: File file:/data/spark_data/history/event-log does not exist

at org.apache.hadoop.fs.RawLocalFileSystem.deprecatedGetFileStatus(RawLocalFileSystem.java:534)

at org.apache.hadoop.fs.RawLocalFileSystem.getFileLinkStatusInternal(RawLocalFileSystem.java:747)

at org.apache.hadoop.fs.RawLocalFileSystem.getFileStatus(RawLocalFileSystem.java:524)

at org.apache.hadoop.fs.FilterFileSystem.getFileStatus(FilterFileSystem.java:409)

at org.apache.spark.scheduler.EventLoggingListener.start(EventLoggingListener.scala:100)

at org.apache.spark.SparkContext.<init>(SparkContext.scala:549)

at org.apache.spark.repl.SparkILoop.createSparkContext(SparkILoop.scala:1017)

at $line3.$read$$iwC$$iwC.<init>(<console>:15)

at $line3.$read$$iwC.<init>(<console>:24)

at $line3.$read.<init>(<console>:26)

at $line3.$read$.<init>(<console>:30)

at $line3.$read$.<clinit>(<console>)

at $line3.$eval$.<init>(<console>:7)

at $line3.$eval$.<clinit>(<console>)

at $line3.$eval.$print(<console>)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.apache.spark.repl.SparkIMain$ReadEvalPrint.call(SparkIMain.scala:1065)

at org.apache.spark.repl.SparkIMain$Request.loadAndRun(SparkIMain.scala:1346)

at org.apache.spark.repl.SparkIMain.loadAndRunReq$1(SparkIMain.scala:840)

at org.apache.spark.repl.SparkIMain.interpret(SparkIMain.scala:871)

at org.apache.spark.repl.SparkIMain.interpret(SparkIMain.scala:819)

at org.apache.spark.repl.SparkILoop.reallyInterpret$1(SparkILoop.scala:857)

at org.apache.spark.repl.SparkILoop.interpretStartingWith(SparkILoop.scala:902)

at org.apache.spark.repl.SparkILoop.command(SparkILoop.scala:814)

at org.apache.spark.repl.SparkILoopInit$$anonfun$initializeSpark$1.apply(SparkILoopInit.scala:125)

at org.apache.spark.repl.SparkILoopInit$$anonfun$initializeSpark$1.apply(SparkILoopInit.scala:124)

at org.apache.spark.repl.SparkIMain.beQuietDuring(SparkIMain.scala:324)

at org.apache.spark.repl.SparkILoopInit$class.initializeSpark(SparkILoopInit.scala:124)

at org.apache.spark.repl.SparkILoop.initializeSpark(SparkILoop.scala:64)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1$$anonfun$apply$mcZ$sp$5.apply$mcV$sp(SparkILoop.scala:974)

at org.apache.spark.repl.SparkILoopInit$class.runThunks(SparkILoopInit.scala:159)

at org.apache.spark.repl.SparkILoop.runThunks(SparkILoop.scala:64)

at org.apache.spark.repl.SparkILoopInit$class.postInitialization(SparkILoopInit.scala:108)

at org.apache.spark.repl.SparkILoop.postInitialization(SparkILoop.scala:64)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1.apply$mcZ$sp(SparkILoop.scala:991)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1.apply(SparkILoop.scala:945)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1.apply(SparkILoop.scala:945)

at scala.tools.nsc.util.ScalaClassLoader$.savingContextLoader(ScalaClassLoader.scala:135)

at org.apache.spark.repl.SparkILoop.org$apache$spark$repl$SparkILoop$$process(SparkILoop.scala:945)

at org.apache.spark.repl.SparkILoop.process(SparkILoop.scala:1059)

at org.apache.spark.repl.Main$.main(Main.scala:31)

at org.apache.spark.repl.Main.main(Main.scala)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:731)

at org.apache.spark.deploy.SparkSubmit$.doRunMain$1(SparkSubmit.scala:181)

at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:206)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:121)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

java.lang.NullPointerException

at org.apache.spark.sql.SQLContext$.createListenerAndUI(SQLContext.scala:1367)

at org.apache.spark.sql.hive.HiveContext.<init>(HiveContext.scala:101)

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:422)

at org.apache.spark.repl.SparkILoop.createSQLContext(SparkILoop.scala:1028)

at $iwC$$iwC.<init>(<console>:15)

at $iwC.<init>(<console>:24)

at <init>(<console>:26)

at .<init>(<console>:30)

at .<clinit>(<console>)

at .<init>(<console>:7)

at .<clinit>(<console>)

at $print(<console>)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.apache.spark.repl.SparkIMain$ReadEvalPrint.call(SparkIMain.scala:1065)

at org.apache.spark.repl.SparkIMain$Request.loadAndRun(SparkIMain.scala:1346)

at org.apache.spark.repl.SparkIMain.loadAndRunReq$1(SparkIMain.scala:840)

at org.apache.spark.repl.SparkIMain.interpret(SparkIMain.scala:871)

at org.apache.spark.repl.SparkIMain.interpret(SparkIMain.scala:819)

at org.apache.spark.repl.SparkILoop.reallyInterpret$1(SparkILoop.scala:857)

at org.apache.spark.repl.SparkILoop.interpretStartingWith(SparkILoop.scala:902)

at org.apache.spark.repl.SparkILoop.command(SparkILoop.scala:814)

at org.apache.spark.repl.SparkILoopInit$$anonfun$initializeSpark$1.apply(SparkILoopInit.scala:132)

at org.apache.spark.repl.SparkILoopInit$$anonfun$initializeSpark$1.apply(SparkILoopInit.scala:124)

at org.apache.spark.repl.SparkIMain.beQuietDuring(SparkIMain.scala:324)

at org.apache.spark.repl.SparkILoopInit$class.initializeSpark(SparkILoopInit.scala:124)

at org.apache.spark.repl.SparkILoop.initializeSpark(SparkILoop.scala:64)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1$$anonfun$apply$mcZ$sp$5.apply$mcV$sp(SparkILoop.scala:974)

at org.apache.spark.repl.SparkILoopInit$class.runThunks(SparkILoopInit.scala:159)

at org.apache.spark.repl.SparkILoop.runThunks(SparkILoop.scala:64)

at org.apache.spark.repl.SparkILoopInit$class.postInitialization(SparkILoopInit.scala:108)

at org.apache.spark.repl.SparkILoop.postInitialization(SparkILoop.scala:64)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1.apply$mcZ$sp(SparkILoop.scala:991)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1.apply(SparkILoop.scala:945)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1.apply(SparkILoop.scala:945)

at scala.tools.nsc.util.ScalaClassLoader$.savingContextLoader(ScalaClassLoader.scala:135)

at org.apache.spark.repl.SparkILoop.org$apache$spark$repl$SparkILoop$$process(SparkILoop.scala:945)

at org.apache.spark.repl.SparkILoop.process(SparkILoop.scala:1059)

at org.apache.spark.repl.Main$.main(Main.scala:31)

at org.apache.spark.repl.Main.main(Main.scala)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:731)

at org.apache.spark.deploy.SparkSubmit$.doRunMain$1(SparkSubmit.scala:181)

at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:206)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:121)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

<console>:16: error: not found: value sqlContext

import sqlContext.implicits._

^

<console>:16: error: not found: value sqlContext

import sqlContext.sql

^

[/mw_shl_code]

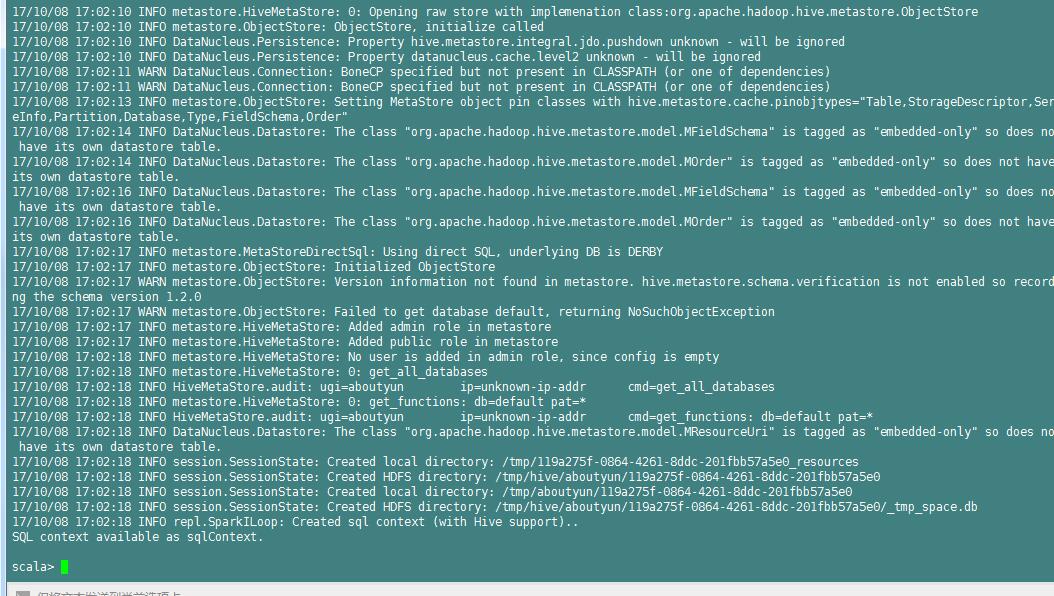

进入spark shell

[mw_shl_code=bash,true]val textFile=sc.textFile("file:///data/spark/README.md")

[/mw_shl_code]说明:

记得这里如果自己创建的文件可能会读取不到。报错如下

[mw_shl_code=bash,true] java.io.FileNotFoundException: File file:/data/spark/change.txt does not exist

at org.apache.hadoop.fs.RawLocalFileSystem.deprecatedGetFileStatus(RawLocalFileSystem.java:534)

at org.apache.hadoop.fs.RawLocalFileSystem.getFileLinkStatusInternal(RawLocalFileSystem.java:747)

at org.apache.hadoop.fs.RawLocalFileSystem.getFileStatus(RawLocalFileSystem.java:524)

at org.apache.hadoop.fs.FilterFileSystem.getFileStatus(FilterFileSystem.java:409)

at org.apache.hadoop.fs.ChecksumFileSystem$ChecksumFSInputChecker.<init>(ChecksumFileSystem.java:140)

at org.apache.hadoop.fs.ChecksumFileSystem.open(ChecksumFileSystem.java:341)

at org.apache.hadoop.fs.FileSystem.open(FileSystem.java:766)

at org.apache.hadoop.mapred.LineRecordReader.<init>(LineRecordReader.java:108)

at org.apache.hadoop.mapred.TextInputFormat.getRecordReader(TextInputFormat.java:67)

at org.apache.spark.rdd.HadoopRDD$$anon$1.<init>(HadoopRDD.scala:240)

at org.apache.spark.rdd.HadoopRDD.compute(HadoopRDD.scala:211)

at org.apache.spark.rdd.HadoopRDD.compute(HadoopRDD.scala:101)

at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:306)

at org.apache.spark.rdd.RDD.iterator(RDD.scala:270)

at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:38)

at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:306)

at org.apache.spark.rdd.RDD.iterator(RDD.scala:270)

at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:66)

at org.apache.spark.scheduler.Task.run(Task.scala:89)

at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:227)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at java.lang.Thread.run(Thread.java:745)

Driver stacktrace:

at org.apache.spark.scheduler.DAGScheduler.org$apache$spark$scheduler$DAGScheduler$$failJobAndIndependentStages(DAGScheduler.scala:1431)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$abortStage$1.apply(DAGScheduler.scala:1419)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$abortStage$1.apply(DAGScheduler.scala:1418)

at scala.collection.mutable.ResizableArray$class.foreach(ResizableArray.scala:59)

at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:47)

at org.apache.spark.scheduler.DAGScheduler.abortStage(DAGScheduler.scala:1418)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$handleTaskSetFailed$1.apply(DAGScheduler.scala:799)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$handleTaskSetFailed$1.apply(DAGScheduler.scala:799)

at scala.Option.foreach(Option.scala:236)

at org.apache.spark.scheduler.DAGScheduler.handleTaskSetFailed(DAGScheduler.scala:799)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.doOnReceive(DAGScheduler.scala:1640)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:1599)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:1588)

at org.apache.spark.util.EventLoop$$anon$1.run(EventLoop.scala:48)

at org.apache.spark.scheduler.DAGScheduler.runJob(DAGScheduler.scala:620)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:1832)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:1845)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:1858)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:1929)

at org.apache.spark.rdd.RDD.count(RDD.scala:1157)

at $iwC$$iwC$$iwC$$iwC$$iwC$$iwC$$iwC$$iwC.<init>(<console>:30)

at $iwC$$iwC$$iwC$$iwC$$iwC$$iwC$$iwC.<init>(<console>:35)

at $iwC$$iwC$$iwC$$iwC$$iwC$$iwC.<init>(<console>:37)

at $iwC$$iwC$$iwC$$iwC$$iwC.<init>(<console>:39)

at $iwC$$iwC$$iwC$$iwC.<init>(<console>:41)

at $iwC$$iwC$$iwC.<init>(<console>:43)

at $iwC$$iwC.<init>(<console>:45)

at $iwC.<init>(<console>:47)

at <init>(<console>:49)

at .<init>(<console>:53)

at .<clinit>(<console>)

at .<init>(<console>:7)

at .<clinit>(<console>)

at $print(<console>)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.apache.spark.repl.SparkIMain$ReadEvalPrint.call(SparkIMain.scala:1065)

at org.apache.spark.repl.SparkIMain$Request.loadAndRun(SparkIMain.scala:1346)

at org.apache.spark.repl.SparkIMain.loadAndRunReq$1(SparkIMain.scala:840)

at org.apache.spark.repl.SparkIMain.interpret(SparkIMain.scala:871)

at org.apache.spark.repl.SparkIMain.interpret(SparkIMain.scala:819)

at org.apache.spark.repl.SparkILoop.reallyInterpret$1(SparkILoop.scala:857)

at org.apache.spark.repl.SparkILoop.interpretStartingWith(SparkILoop.scala:902)

at org.apache.spark.repl.SparkILoop.command(SparkILoop.scala:814)

at org.apache.spark.repl.SparkILoop.processLine$1(SparkILoop.scala:657)

at org.apache.spark.repl.SparkILoop.innerLoop$1(SparkILoop.scala:665)

at org.apache.spark.repl.SparkILoop.org$apache$spark$repl$SparkILoop$$loop(SparkILoop.scala:670)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1.apply$mcZ$sp(SparkILoop.scala:997)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1.apply(SparkILoop.scala:945)

at org.apache.spark.repl.SparkILoop$$anonfun$org$apache$spark$repl$SparkILoop$$process$1.apply(SparkILoop.scala:945)

at scala.tools.nsc.util.ScalaClassLoader$.savingContextLoader(ScalaClassLoader.scala:135)

at org.apache.spark.repl.SparkILoop.org$apache$spark$repl$SparkILoop$$process(SparkILoop.scala:945)

at org.apache.spark.repl.SparkILoop.process(SparkILoop.scala:1059)

at org.apache.spark.repl.Main$.main(Main.scala:31)

at org.apache.spark.repl.Main.main(Main.scala)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:731)

at org.apache.spark.deploy.SparkSubmit$.doRunMain$1(SparkSubmit.scala:181)

at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:206)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:121)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

Caused by: java.io.FileNotFoundException: File file:/data/spark/change.txt does not exist

at org.apache.hadoop.fs.RawLocalFileSystem.deprecatedGetFileStatus(RawLocalFileSystem.java:534)

at org.apache.hadoop.fs.RawLocalFileSystem.getFileLinkStatusInternal(RawLocalFileSystem.java:747)

at org.apache.hadoop.fs.RawLocalFileSystem.getFileStatus(RawLocalFileSystem.java:524)

at org.apache.hadoop.fs.FilterFileSystem.getFileStatus(FilterFileSystem.java:409)

at org.apache.hadoop.fs.ChecksumFileSystem$ChecksumFSInputChecker.<init>(ChecksumFileSystem.java:140)

at org.apache.hadoop.fs.ChecksumFileSystem.open(ChecksumFileSystem.java:341)

at org.apache.hadoop.fs.FileSystem.open(FileSystem.java:766)

at org.apache.hadoop.mapred.LineRecordReader.<init>(LineRecordReader.java:108)

at org.apache.hadoop.mapred.TextInputFormat.getRecordReader(TextInputFormat.java:67)

at org.apache.spark.rdd.HadoopRDD$$anon$1.<init>(HadoopRDD.scala:240)

at org.apache.spark.rdd.HadoopRDD.compute(HadoopRDD.scala:211)

at org.apache.spark.rdd.HadoopRDD.compute(HadoopRDD.scala:101)

at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:306)

at org.apache.spark.rdd.RDD.iterator(RDD.scala:270)

at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:38)

at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:306)

at org.apache.spark.rdd.RDD.iterator(RDD.scala:270)

at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:66)

at org.apache.spark.scheduler.Task.run(Task.scala:89)

at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:227)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at java.lang.Thread.run(Thread.java:745)

[/mw_shl_code]

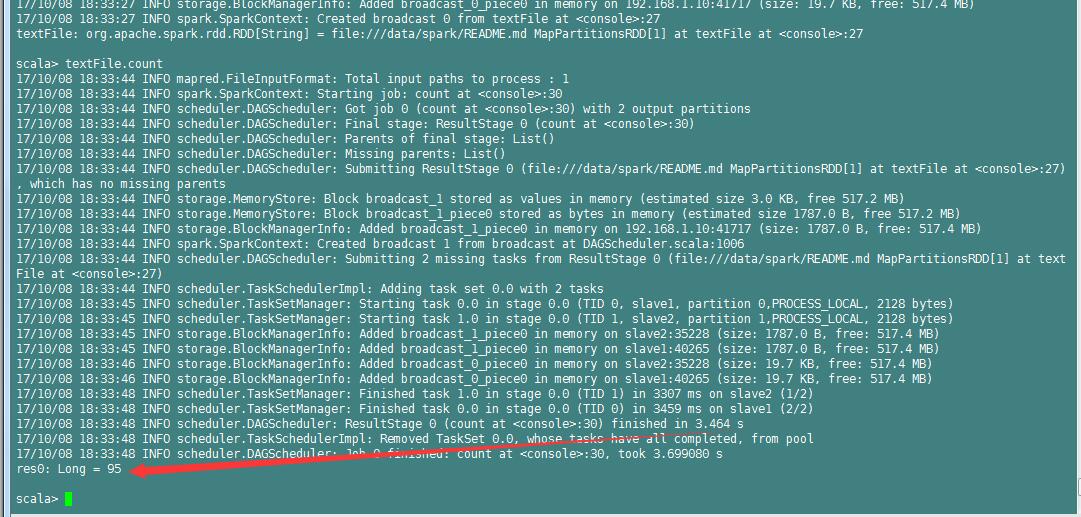

执行[mw_shl_code=bash,true]textFile.count [/mw_shl_code]

[mw_shl_code=bash,true]textFile.first[/mw_shl_code]

输出如下内容

[mw_shl_code=bash,true]scala> textFile.first

17/10/08 18:34:23 INFO spark.SparkContext: Starting job: first at <console>:30

17/10/08 18:34:23 INFO scheduler.DAGScheduler: Got job 1 (first at <console>:30) with 1 output partitions

17/10/08 18:34:23 INFO scheduler.DAGScheduler: Final stage: ResultStage 1 (first at <console>:30)

17/10/08 18:34:23 INFO scheduler.DAGScheduler: Parents of final stage: List()

17/10/08 18:34:23 INFO scheduler.DAGScheduler: Missing parents: List()

17/10/08 18:34:23 INFO scheduler.DAGScheduler: Submitting ResultStage 1 (file:///data/spark/README.md MapPartitionsRDD[1] at textFile at <console>:27), which has no missing parents

17/10/08 18:34:23 INFO storage.MemoryStore: Block broadcast_2 stored as values in memory (estimated size 3.1 KB, free 517.2 MB)

17/10/08 18:34:23 INFO storage.MemoryStore: Block broadcast_2_piece0 stored as bytes in memory (estimated size 1843.0 B, free 517.2 MB)

17/10/08 18:34:23 INFO storage.BlockManagerInfo: Added broadcast_2_piece0 in memory on 192.168.1.10:41717 (size: 1843.0 B, free: 517.4 MB)

17/10/08 18:34:23 INFO spark.SparkContext: Created broadcast 2 from broadcast at DAGScheduler.scala:1006

17/10/08 18:34:23 INFO scheduler.DAGScheduler: Submitting 1 missing tasks from ResultStage 1 (file:///data/spark/README.md MapPartitionsRDD[1] at textFile at <console>:27)

17/10/08 18:34:23 INFO scheduler.TaskSchedulerImpl: Adding task set 1.0 with 1 tasks

17/10/08 18:34:23 INFO scheduler.TaskSetManager: Starting task 0.0 in stage 1.0 (TID 2, slave2, partition 0,PROCESS_LOCAL, 2128 bytes)

17/10/08 18:34:23 INFO storage.BlockManagerInfo: Added broadcast_2_piece0 in memory on slave2:35228 (size: 1843.0 B, free: 517.4 MB)

17/10/08 18:34:23 INFO scheduler.TaskSetManager: Finished task 0.0 in stage 1.0 (TID 2) in 116 ms on slave2 (1/1)

17/10/08 18:34:23 INFO scheduler.TaskSchedulerImpl: Removed TaskSet 1.0, whose tasks have all completed, from pool

17/10/08 18:34:23 INFO scheduler.DAGScheduler: ResultStage 1 (first at <console>:30) finished in 0.117 s

17/10/08 18:34:23 INFO scheduler.DAGScheduler: Job 1 finished: first at <console>:30, took 0.161753 s

res1: String = # Apache Spark

[/mw_shl_code]

后续更新

相关文章:

日志分析实战之清洗日志小实例1:使用spark&Scala分析Apache日志

http://www.aboutyun.com/forum.php?mod=viewthread&tid=22856

日志分析实战之清洗日志小实例2:导入日志清洗代码并打包

http://www.aboutyun.com/forum.php?mod=viewthread&tid=22862

日志分析实战之清洗日志小实例3:如何在spark shell中导入自定义包

http://www.aboutyun.com/forum.php?mod=viewthread&tid=22881

日志分析实战之清洗日志小实例4:统计网站相关信息

http://www.aboutyun.com/forum.php?mod=viewthread&tid=22900

日志分析实战之清洗日志小实例5:实现获取不能访问url

http://www.aboutyun.com/forum.php?mod=viewthread&tid=22911

日志分析实战之清洗日志小实例6:获取uri点击量排序并得到最高的url

http://www.aboutyun.com/forum.php?mod=viewthread&tid=22928

日志分析实战之清洗日志小实例7:查看样本数据,保存统计数据到文件

http://www.aboutyun.com/forum.php?mod=viewthread&tid=22953

链接:http://pan.baidu.com/s/1pKXn8Ob 密码:yndp |

|

/2

/2